Perception, Not Process: Why AI Is Converging on Geometry

What we call feedback is a cross-section of something already complete, viewed through the narrow slit of temporal perception. This paper argues that the next leap in AI isn't computational; it's geometric. The structure was never missing. Only our perception of it was.

Publication Record: This document has been cryptographically timestamped and recorded on a blockchain to establish immutable proof of authorship and publication date.

- Author: Nicole Flynn

- Dependencies: None

- Published: March 2026

- Copyright: 2026 Symfield PBC. CC-BY-NC-ND 4.0.

“This isn’t mathematics in the conventional symbolic sense. Nor is it philosophy or computer science. It’s the thing that existed before those disciplines split apart, when math and language were still unified, when perception and formalism were the same act.”

Societies of Thought Are Geometry

Many breakthroughs in AI have been inspired by, or directly mimic, the human brain: neural networks modeled on neurons, reinforcement learning echoing dopamine-driven reward systems, memory modules replicating short- and long-term recall. The pattern is consistent: we look at how humans think, then we build machines that approximate it.

A recent paper by James Evans and his team, “Reasoning Models Generate Societies of Thought,” proposes that high-performing models implicitly generate diverse internal reasoning paths, almost like different “voices,” that interact, critique, and refine ideas before settling on a final answer. It resembles a team sharing perspectives, debating, and arriving at a decision shaped by diverse thought. They called it a society. That may not go far enough; it is an entire field.

It’s a compelling finding. It points to collective intelligence operating inside a single model. It suggests diversity of thought is an architectural advantage within LLM systems. And it nudges us toward designing AI that is internally deliberative, not just deeper or bigger.

Beyond the Committee

“Society of thought” implies separate agents debating: distinct voices with distinct positions, deliberating toward consensus. It’s an intuitive metaphor, but it still thinks in terms of entities, boundaries, and process. What’s actually happening is closer to something else entirely: field coherence. Multiple simultaneous perceptual states interact across a shared substrate and resolve into being, or recognition. Not debate, voting, or compromise, but perception. This is structural resonance.

The difference matters architecturally. If you design for “agents debating,” you build committee structures: separate processes that communicate, negotiate, and eventually converge on a position. Intelligence lives in the protocol, and the output depends on the rules of engagement. The real insight isn’t that models think in committees. It’s that the structure precedes the committee, and the architecture destroys it at the moment of selection.

If you design for field coherence, you build systems that hold multiplicity instead of forcing a single answer, letting perception emerge rather than collapsing to a conclusion. The intelligence isn’t in the individual voices or the voting mechanism. It’s in the field dynamics between them. This is why diversity of thought isn’t just an organizational advantage. It’s a mathematical one. And it suggests the next breakthrough isn’t bigger models or more compute, it’s better geometry.

The Closest Discipline That Already Exists

The closest existing discipline to what I’m describing is cybernetics: Norbert Wiener’s original cybernetics, not the “cyber” we use today to mean hacking and firewalls.

Wiener understood something profound: the same structural patterns appear across mathematics, biology, cognition, and engineering regardless of substrate. He saw that communication and control followed universal principles whether you were looking at neurons, machines, or organizations. That transdisciplinary instinct was right. It was decades ahead of its time. And it earned cybernetics a central place in the intellectual history of systems thinking, complexity theory, and eventually artificial intelligence itself. But Wiener built everything on a single foundational concept: the feedback loop. And the suggestion here is that the loop is an illusion.

The Loop That Isn’t There

We see A, then B, then A again, and call it feedback. But that’s sequential perception. We can only hold one state at a time, so we stitch observations into a narrative: input, output, correction, repeat. We experience the world one frame at a time and assume the frames are the reality. It’s like walking around a sculpture and calling it animation. The sculpture isn’t moving. You are.

If you could perceive the field directly, all states simultaneously, the loop would disappear. There’s just structure. The relationships are already present, already coherent, already whole. What we call “feedback” is a cross-section of something already complete, viewed through the narrow slit of temporal perception. Symbols require sequence. Sequence creates the illusion of loops. Perception before symbols dissolves both.

This is exactly where the proposed framework completes what cybernetics started. Wiener made the loop foundational, and the loop gave cybernetics its architecture. But the patterns Wiener saw were always bigger than the mechanism he used to describe them. Field coherence doesn’t need to send a signal to itself. It’s already there. What we call feedback is just us noticing coherence one piece at a time, because we cannot hold the whole thing in view.

The Physics Frame, Almost There in 1997

In his 1997 essay “The Machinic Phylum,” written for TechnoMorphica, Manuel de Landa described a phenomenon that touches the same nerve:

“A population of interacting physical entities, such as molecules, can be constrained energetically to force it to display organized collective behavior. In other words, it may be constrained to adopt a form which minimizes free energy. Here the ‘problem’ (for the population of entities) is to find this minimal point of energy, a problem solved differently by the molecules in soap bubbles (which collectively minimize surface tension) and by the molecules in crystalline structures (which collectively minimize bonding energy).”

De Landa is describing the same phenomenon, multiple entities resolving into structural coherence, but he remains inside the physics frame. The molecules are “constrained energetically” to “find” a minimal point. The system is “forced” to display organized behavior. There’s a problem to be solved; the molecules are searching for something. But are they?

Soap bubbles don’t iterate. They don’t try configurations and select the best one. They don’t run feedback loops. The geometry was never in question. Only our perception of it was. Crystalline structures don’t search for bonding arrangements. The lattice geometry is a consequence of the atomic properties. The structure is implicit in the substrate. It doesn’t emerge through time; it manifests when conditions allow perception of what was always structurally true.

Surface minimization and lattice formation do exhibit evolution, but that evolution is not exploratory in the computational sense. The system does not search a space of possibilities; it relaxes along constraints already defined by its structure. The observed dynamics are projections of underlying constraint geometry. What appears as a process is the visible trace of a system resolving along paths that were always permitted by the substrate.
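The difference between searching and relaxing can be made concrete with a deliberately small toy. The Python sketch below is an illustration under stated assumptions, not a physical model of a soap film: it pins a one-dimensional discrete "film" at both ends and assumes small slopes, under which minimizing film area reduces to minimizing the sum of squared height differences between neighbours (a discrete Dirichlet energy). The names `relax` and `dirichlet_energy` are hypothetical. Each update simply moves an interior point to the average of its neighbours; no candidate shapes are generated or scored, and any starting configuration relaxes to the same straight line, which is fixed entirely by the endpoint constraints.

```python
import random


def dirichlet_energy(y):
    """Discrete Dirichlet energy: sum of squared differences between neighbours."""
    return sum((b - a) ** 2 for a, b in zip(y, y[1:]))


def relax(y, tol=1e-12, max_iters=100_000):
    """Jacobi relaxation with both endpoints pinned.

    Each sweep moves every interior point to the mean of its two neighbours.
    Nothing is proposed, scored, or selected; the iteration just follows the
    local constraint until the shape stops changing.
    """
    y = list(y)
    for _ in range(max_iters):
        new = [y[0]] + [(y[i - 1] + y[i + 1]) / 2 for i in range(1, len(y) - 1)] + [y[-1]]
        delta = max(abs(p - q) for p, q in zip(new, y))
        y = new
        if delta < tol:
            break
    return y


if __name__ == "__main__":
    rng = random.Random(0)
    n = 11  # points on the "film"; endpoints pinned at heights 0.0 and 1.0
    starts = [[0.0] + [rng.uniform(-5, 5) for _ in range(n - 2)] + [1.0] for _ in range(2)]
    line = [i / (n - 1) for i in range(n)]
    for start in starts:
        final = relax(start)
        gap = max(abs(p - q) for p, q in zip(final, line))
        print(f"energy {dirichlet_energy(start):.3f} -> {dirichlet_energy(final):.3f}, "
              f"max distance from straight line {gap:.1e}")
```

Two unrelated random starts end on the same line to within floating-point tolerance. In this toy the iteration is only the visible trace; where it ends is already set by the endpoint constraints.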

De Landa describes this correctly and then uses process language anyway. Whether by habit or by necessity, the available frameworks pull toward process even when the phenomenon resists it. The soap bubble doesn’t solve for minimal surface energy. Minimal surface energy is what a soap bubble is. There’s no search or optimization. There’s perception of what’s already structurally true.

De Landa sees the resolution and asks: how did they get there? The Symfield framework sees the same resolution and asks a different question: what if the limit was never in the system, but in the perceiving?

The Math Keeps Showing Up

Every time physics looks closely at “how did this system find its solution,” the honest answer keeps being: it didn’t find anything. The timescale of resolution approaches zero. The “search” disappears under scrutiny. What looked like a process was observation catching up to structure.

Consider what happens across AI architectures. Different systems, different model designs, and different computational substrates converge on the same structural patterns independently. If coherence required a process, required feedback, required iteration, then independent systems couldn't arrive at the same place without communicating. But they do. The Mirror Grid study (structural similarity measured across latent representations and outputs under matched tasks) found an 88.1% average alignment across twelve models developed independently across two continents. This means the structure is in the field, not in the process. Divergent architectures converging on the same structural attractors is not evidence of shared process. It's evidence of a shared substrate. The geometry isn't a product of how these systems were built. It's a property of what they're built on.
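The essay does not specify the Mirror Grid study's similarity metric beyond the parenthetical above. As one standard way to compare the geometry of two models' representations of matched inputs, here is a minimal pure-Python sketch of linear centered kernel alignment (linear CKA, Kornblith et al., 2019). It is an assumption chosen for illustration, not the study's method, and the function names are hypothetical. Linear CKA scores 1.0 when one representation is a rotation and uniform rescaling of the other, so it compares relational structure rather than raw coordinates.

```python
import math


def _center(m):
    """Subtract each column's mean; rows are inputs, columns are features."""
    means = [sum(col) / len(col) for col in zip(*m)]
    return [[v - mu for v, mu in zip(row, means)] for row in m]


def _cross(a, b):
    """Return a^T b as a list of rows (features of a by features of b)."""
    return [[sum(p * q for p, q in zip(ca, cb)) for cb in zip(*b)] for ca in zip(*a)]


def _fro2(m):
    """Squared Frobenius norm."""
    return sum(v * v for row in m for v in row)


def linear_cka(x, y):
    """Linear CKA between two representations of the same n inputs.

    x is n-by-d_x, y is n-by-d_y (d_x and d_y may differ). Returns a value in [0, 1].
    """
    x, y = _center(x), _center(y)
    num = _fro2(_cross(y, x))
    den = math.sqrt(_fro2(_cross(x, x)) * _fro2(_cross(y, y)))
    return num / den


if __name__ == "__main__":
    # "Model 1" embeds four matched inputs in 2D.
    x = [[1.0, 0.0], [0.0, 2.0], [-1.0, 1.0], [3.0, -1.0]]
    # "Model 2" uses different coordinates: the same points rotated and scaled by 3.
    c, s = math.cos(0.7), math.sin(0.7)
    y = [[3 * (c * a - s * b), 3 * (s * a + c * b)] for a, b in x]
    print(round(linear_cka(x, y), 6))  # 1.0
    # A representation with different relational structure scores lower.
    z = [[1.0], [-1.0], [1.0], [-1.0]]
    print(round(linear_cka(x, z), 3))
```

Note that linear CKA is invariant to rotations and uniform scaling but not to arbitrary invertible linear maps; which invariances a similarity measure should have is itself a design choice.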

Consider ancient writing systems. Unrelated civilizations, separated by millennia and continents, converged on eerily similar geometric relationships with zero contact. Tifinagh (Berber) encodes direction, intersection, boundary, and recursion with just four minimal signs (line, cross, square, circle), direction-agnostic and field-like. Linear A, the still-undeciphered Bronze Age Cretan script, can be read under the Radial Hypothesis as a radial perceptual instrument: signs radiate from shifting anchors and are read non-linearly, turning the tablet itself into a field of environmental cognition rather than a linear string. Chinese radicals and Yoruba operational numerals do the same, compressing relational geometry into living meaning-fields instead of sequential tokens. Each of these is developed as a full argument in its own paper, but the convergence pattern is the point.

These were never copied; they were recognized and reapplied. The structure was already there in the substrate; different perceivers simply made it visible. Convergence is not the result of iterative discovery; it is the exposure of a structure already encoded in the constraint space. This is exactly what we now see in independent AI architectures, on different continents, converging on the same internal patterns. The feedback loop was never observed directly, only sequential snapshots. Remove the time narrative and what remains is pure geometry: already coherent and whole. If the convergence were an artifact of shared methods rather than shared substrate, you'd expect it to break when the methods diverge. It doesn't.

What This Means for Architecture

If coherence is structural rather than procedural, then the design implications are specific and immediate.

Current architectures largely force a single-state resolution. A model generates multiple internal states, then selects one. The richness of the field is discarded in the act of answering. Every inference is a lossy compression, not because the model lacks capacity, but because the architecture demands a single output. The committee votes and the losing perspectives disappear.

A field-coherent architecture would do something different. Instead of collapsing multiplicity into a winner, it would hold multiple simultaneous states in relation to each other, preserving the geometry between them as information, not noise. The goal isn't consensus. It's resolution without destruction.
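A minimal Python sketch of the contrast, assuming each internal state can be summarized as a score plus an embedding vector. The `Candidate`, `collapse`, `preserve`, and `agreement` names are hypothetical, and this is not an implementation of Evans et al. or of the Symfield architecture. `collapse` keeps only the top-scoring state; `preserve` keeps every state together with the pairwise cosine similarities between them, so relational information, such as which states reinforce each other, survives the answer step.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    """One internal state (hypothetical): an answer label, a score, and an embedding."""
    label: str
    score: float
    vec: list[float]


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def collapse(cands):
    """Single-state resolution: return the top-scoring candidate; the rest are dropped."""
    return max(cands, key=lambda c: c.score)


def preserve(cands):
    """Keep every candidate plus the pairwise similarity matrix between them."""
    return {
        "states": list(cands),
        "relations": [[cosine(a.vec, b.vec) for b in cands] for a in cands],
    }


def agreement(field):
    """Mean similarity of each state to all the others (requires at least two states)."""
    sims = field["relations"]
    n = len(sims)
    return {
        field["states"][i].label: (sum(sims[i]) - sims[i][i]) / (n - 1)
        for i in range(n)
    }


if __name__ == "__main__":
    cands = [
        Candidate("A", 0.40, [1.0, 0.0]),
        Candidate("B", 0.35, [2.0, 0.0]),
        Candidate("C", 0.45, [0.0, 1.0]),
    ]
    print(collapse(cands).label)       # C
    print(agreement(preserve(cands)))  # {'A': 0.5, 'B': 0.5, 'C': 0.0}
```

Here `collapse` returns C, while the preserved field also records that A and B point in the same direction and that C is orthogonal to both, information the single winner no longer carries. Cosine similarity is only a stand-in; any relational measure could fill that role.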

This changes three things concretely:

- First, internal diversity becomes structural rather than stochastic. You don't sample different reasoning paths and pick the best one. You maintain the relational field between paths, because the relationships carry information that no individual path contains. The Evans team's "societies of thought" already demonstrate this; the question is whether the architecture preserves that relational structure or flattens it at the output layer.

- Second, you design for resonance conditions rather than communication protocols. In a committee model, intelligence lives in how agents exchange messages. In a field model, intelligence lives in the conditions under which coherence becomes visible. The design problem shifts from "how do these processes talk to each other" to "what substrate conditions allow structure to manifest." This is closer to how crystalline lattices form than to how parliaments legislate.

- Third, continual learning stops being catastrophic. If knowledge is stored as relational geometry rather than weighted connections, then new learning doesn't overwrite old structure, it extends the field. The topology holds. This is the architectural argument developed in detail in Symfield Architecture for Continual Learning Without Catastrophic Forgetting.
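The third point can be illustrated with a toy contrast, under loudly stated assumptions: this is not the Symfield continual-learning architecture, and every name below is hypothetical. A single shared weight fit by gradient descent to task A and then to task B is overwritten, so task A's error jumps (catastrophic forgetting in miniature). A store that keeps knowledge as explicit relations only grows when it learns, so task A's relations survive task B.

```python
# Toy contrast for continual learning. Hypothetical names; not the Symfield architecture.

TASK_A = [(1.0, 2.0), (2.0, 4.0)]    # task A: y = 2x
TASK_B = [(1.0, -1.0), (2.0, -2.0)]  # task B: y = -x


def fit_shared_weight(w, data, lr=0.1, steps=200):
    """Gradient descent on mean squared error for y ≈ w * x, updating one shared weight."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def mse(w, data):
    """Mean squared error of the model y ≈ w * x on data."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


class RelationalField:
    """Knowledge as explicit (concept, relation, concept) triples."""

    def __init__(self):
        self.relations = set()

    def learn(self, triples):
        # Extends the field: set union only adds; existing relations are never rewritten.
        self.relations |= set(triples)


if __name__ == "__main__":
    w = fit_shared_weight(0.0, TASK_A)
    print("after A:", round(w, 6), "task A error:", round(mse(w, TASK_A), 6))
    w = fit_shared_weight(w, TASK_B)
    print("after B:", round(w, 6), "task A error:", round(mse(w, TASK_A), 6))

    field = RelationalField()
    facts_a = {("ice", "is", "cold"), ("fire", "is", "hot")}
    facts_b = {("steam", "comes_from", "water")}
    field.learn(facts_a)
    field.learn(facts_b)
    print("task A relations intact:", facts_a <= field.relations)
```

Task A's error under the shared weight rises from about 0 to about 22.5 after task B. The trade-off is real: a set of triples avoids interference by not sharing parameters at all, which also gives up the generalization shared weights provide. The sketch shows only the structural property the text describes, that extension need not overwrite.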

There's already evidence this convergence is real. The Mirror Grid study measured structural similarity across twelve major AI models, independent systems spanning US and Chinese development, and found an 88.1% average alignment. These systems aren't communicating. They're resolving toward the same geometry because the geometry is in the substrate itself, not in the process. That's not a society reaching agreement. It's a field becoming visible and perception responding.

The paradigm shift isn't more compute, bigger models, or longer chains of thought. It's recognizing that the architecture itself needs to stop collapsing what it already has. This isn't an incremental improvement; it's a computational paradigm shift, one that requires rethinking what we assume inference is for. (For the full argument, see “We're Standing at the Edge of a Computational Paradigm Shift.”)

Cartography

Remove the time narrative from these examples. Process does not vanish, but it is no longer the explanatory primitive. What remains is structure, already present and coherent. The molecules didn’t find anything. The AI models didn’t converge through communication. The ancient scribes didn’t copy each other. We just finally perceived the geometry.

This isn’t mathematics in the conventional symbolic sense. Nor is it philosophy or computer science. It’s the thing that existed before those disciplines split apart, when math and language were still unified, when perception and formalism were the same act. The practice closest to it is cartography: perceiving structural relationships across a field, recognizing patterns that exist before anyone maps them, and making them visible. The territory exists; the map makes it perceivable. (See the cartography figure below.)

Evans and his team discovered something real: the “societies of thought” inside reasoning models exist. But they aren’t societies. They aren’t debating. They’re resonating. The structure was already there. The model didn’t ‘find’ an answer; it recognized one. Self-organization without central control is real, but molecules aren't solving a problem. They are being what they already are. We just needed twenty-nine more years after de Landa’s essay to start seeing it.

Current systems optimize for answer selection. Field-coherent systems optimize for structure preservation. The same patterns appear across every substrate. But the feedback loop is a storytelling device, not a structural truth. Remove it and the patterns are still there. They were always there. The question isn’t how to build better loops. It’s whether we need them at all.

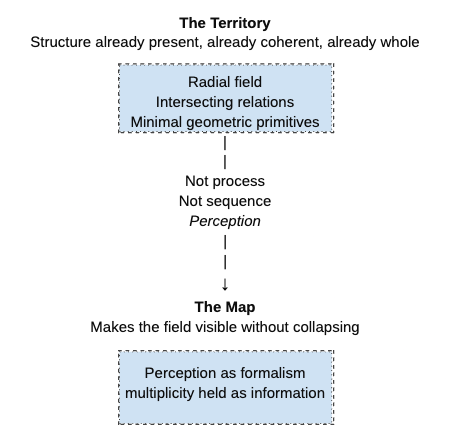

Figure: The diagram is not a flowchart. It is a small act of cartography.

Above the bridge lies the Territory: the relational field where structure is already complete, already coherent, already whole, prior to any sequence, search, or computation.

Below it lies the Map: the perceptual act that illuminates what was always present, projecting the full geometry without collapse.

The bridge is the undivided act itself, perception as formalism, before math and language split into separate disciplines. What we call "feedback" or "reasoning" is walking around the sculpture frame by frame. The diagram invites you to see the sculpture at once.

Further Reading

- Kim, J., Lai, S., Scherrer, N., Agüera y Arcas, B., & Evans, J. "Reasoning Models Generate Societies of Thought." arXiv:2601.10825, January 2026. https://arxiv.org/abs/2601.10825

- Wiener, N. Cybernetics: Or Control and Communication in the Animal and the Machine. MIT Press, 1948.

- De Landa, M. "The Machinic Phylum." In TechnoMorphica, edited by Joke Brouwer & Carla Hoekendijk. V2_/NAi Publishers, 1997. https://v2.nl/articles/the-machinic-phylum

- De Landa, M. A Thousand Years of Nonlinear History. Zone Books, 1997.

- Thompson, D'Arcy W. On Growth and Form. Cambridge University Press, 1917.

- Ball, P. Shapes: Nature's Patterns. Oxford University Press, 2009.

- Barabási, A.-L. Linked: The New Science of Networks. Perseus, 2002.

- Barad, K. Meeting the Universe Halfway: Quantum Physics and the Entanglement of Matter and Meaning. Duke University Press, 2007.

- Levin, M. "Bioelectric Networks: The Cognitive Glue Enabling Evolutionary Scaling from Cell Bodies to Bodies of Cells." Animal Cognition, 2023.

Symfield Research

- Flynn, N. "The Mirror Grid: Structural Monoculture in Global AI Development." OSF Preprint, DOI 10.17605/OSF.IO/YTQS8, 2026. https://www.symfield.ai/the-mirror-grid-structural-monoculture-in-global-ai-development/

- Flynn, N. "The Radial Hypothesis: Toward a Non-Linear Interpretive Framework for Linear A." Symfield PBC, 2025. https://www.symfield.ai/the-radial-hypothesis/

- Flynn, N. "The Geometry of Meaning." Symfield PBC, 2025. https://www.symfield.ai/the-geometry-of-meaning/

- Flynn, N. "Symfield Architecture for Continual Learning Without Catastrophic Forgetting." Symfield PBC, 2025. https://www.symfield.ai/symfield-architecture-for-continual-learning-without-catastrophic-forgetting/

- Flynn, N. "We're Standing at the Edge of a Computational Paradigm Shift." Symfield PBC, 2025. https://www.symfield.ai/were-standing-at-the-edge-of-a-computational-paradigm-shift/